About Us

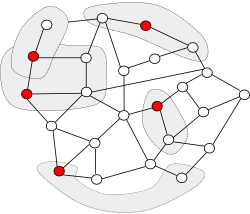

The goal of our research is to build intelligent software systems that automatically enhance the efficiency and survivability of programs, thereby enabling application programmers to focus on high-level algorithmic issues. We work in several areas, ranging from restructuring and optimizing compilers to protocols for fault-tolerant computing systems.

Our group is best known for its contributions to the automatic parallelization and locality enhancement of programs. These advances are used in software products from Intel, IBM, Hewlett-Packard, Silicon Graphics and Digital Equipment Corporation, among other companies.

We are currently working on exploiting parallelism on multicore systems and are investigating compiler techniques, runtime support and programming models that make parallel programming more accessible. See the Galois Project for more details.
